How Anonymous Peer Ratings Create Better Teams Than Coach Rankings

In professional sport, coaches rate players. They have video analysis, performance data, GPS tracking, heart rate monitors, and a full-time staff to help them evaluate talent. A Premier League manager can tell you a player's sprint distance to the metre and their pass completion rate to the decimal.

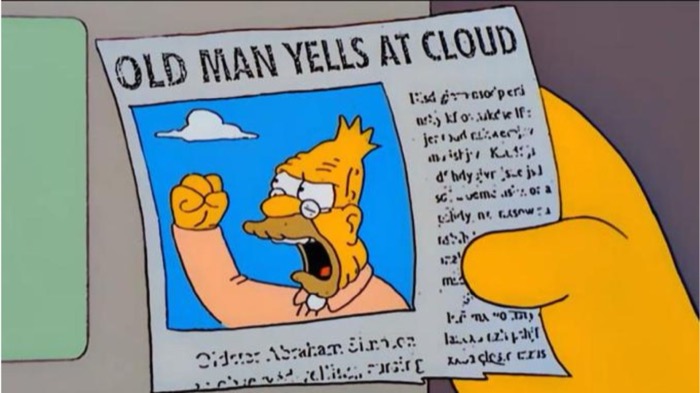

In your Tuesday night football group, you've got Dave's opinion.

Dave's a decent bloke. He organises the games, keeps track of who's coming, and usually ends up rating everyone when it's time to split teams. But Dave is one person with one set of eyes, one set of biases, and one perspective on what makes a good footballer. And the truth is, Dave's ratings are probably wrong — not because Dave is bad at football, but because no single person can accurately assess an entire group.

There's a better approach, and it's been hiding in plain sight: let everyone rate everyone. Anonymously.

The Problem With a Single Rater

When one person rates the whole group, several things go wrong at once.

Limited perspective. Dave plays midfield. He sees midfield interactions clearly — who passes well, who holds position, who tracks back. But he has a much hazier view of what the defenders are doing behind him or how the goalkeeper is reading the game. He's literally not watching most of the action on the pitch.

Personal bias. Dave likes players who play the way he thinks football should be played. If Dave values pace and directness, he'll overrate the quick wingers and underrate the methodical playmaker. If Dave prizes defensive solidity, the goalscorers get short-changed. Everyone has a mental model of "good football" and it skews their ratings.

Social pressure. If Dave's ratings aren't anonymous, he has to live with the consequences. He'll inflate the ratings of close friends because giving your mate a three out of ten creates awkward situations. He'll be reluctant to give anyone a genuinely low score because nobody wants to be the bad guy. The result is compressed ratings where everyone clusters between five and eight, making it nearly impossible to differentiate between players.

Recency bias. Dave remembers last week. Maybe the week before. He doesn't have a running mental database of how each player has performed across thirty games. His ratings are disproportionately influenced by recent performances, good or bad.

None of this makes Dave a bad person. It makes him a human being doing something humans aren't built for: objectively assessing a group of people they're personally involved with.

The Wisdom of Crowds

In 1906, the statistician Francis Galton observed something curious at a livestock fair in Plymouth. Around 800 visitors were asked to guess the weight of an ox once it had been slaughtered and dressed. Individual guesses were all over the place, some wildly high, some absurdly low. But the average of all the guesses was almost perfectly accurate, coming within one pound of the actual weight.

This phenomenon, later popularised as "the wisdom of crowds", has since been observed in many studies across different domains. The aggregate judgement of a group often matches or beats individual expert assessments, provided certain conditions are met.

The conditions are:

Independence

Each person must form their opinion without knowing what others think. If everyone sees Dave's ratings before submitting their own, they'll anchor to Dave's numbers. Independence means fresh, uninfluenced judgements.

Diversity

The group needs different perspectives. In a football context, this happens naturally. Defenders see the game differently from attackers. The goalkeeper notices things nobody else does. Fast players evaluate pace differently from slow ones. Each rater brings a unique angle.

Decentralisation

No single rater's opinion should dominate. When every rating carries equal weight, individual biases tend to cancel out. Dave's overrating of his mates is balanced by other players who see those mates more objectively.

When these conditions are met, the crowd's average converges on something close to the truth. Not perfectly — we're still talking about subjective football opinions — but far closer than any individual could get alone.
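To see why averaging helps, here is a rough simulation rather than real data. It assumes a player has some "true" level (6.0, a made-up number), and that each of fourteen raters reports that level plus their own independent error. The function name `simulate_ratings`, the noise level and the seed are all assumptions chosen for illustration.

```python
import random
import statistics

def simulate_ratings(true_skill, n_raters, noise_sd, rng):
    """Each rater reports the true skill plus their own independent error."""
    return [true_skill + rng.gauss(0, noise_sd) for _ in range(n_raters)]

rng = random.Random(42)  # fixed seed so the run is repeatable
true_skill = 6.0         # hypothetical "real" ability on a one-to-ten scale
ratings = simulate_ratings(true_skill, n_raters=14, noise_sd=1.5, rng=rng)

# How far off is the crowd's average?
crowd_error = abs(statistics.mean(ratings) - true_skill)

# How far off is a single rater, on average?
individual_errors = [abs(r - true_skill) for r in ratings]
typical_individual_error = statistics.mean(individual_errors)

print(f"crowd average is off by {crowd_error:.2f}")
print(f"a typical single rater is off by {typical_individual_error:.2f}")
```

The crowd's error can never be larger than the typical individual error, and it is usually much smaller when raters miss in different directions. If everyone shares the same bias (say, they all copied Dave), the errors stop cancelling and the advantage disappears, which is why independence matters.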

Why Anonymity Changes Everything

Take the same group of players and ask them to rate each other publicly, with names attached. Watch what happens.

Ratings cluster. Nobody wants to give a low score because the recipient will see it. Nobody wants to stand out with a harsh assessment. Social dynamics take over: you rate your friends generously, you rate people you don't know blandly, and you avoid rating anyone truly low because that creates conflict.

The result is noise. Compressed, socially filtered noise that tells you very little about actual skill differences.

Now make those same ratings anonymous. Nobody knows who rated who. The social pressure vanishes. And something interesting happens: people get honest.

Not cruel — honest. There's an important distinction. Anonymous raters don't suddenly become harsh. They become accurate. They feel free to give a genuinely good player a nine and a weaker player a four without worrying about the fallout. The spread of ratings widens, which means the data actually contains information.

Studies in organisational psychology show the same pattern. Anonymous 360-degree reviews in workplaces produce significantly more differentiated and accurate feedback than named reviews. People are kinder when they're being watched and more truthful when they're not. For the purposes of team balancing, truthful is what we need.
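The difference between compressed and spread-out ratings is easy to put a number on. The sketch below uses invented ratings for the same eight players, once with names attached and once anonymously, and compares two simple measures of spread. The figures are hypothetical and only there to show the calculation.

```python
import statistics

# Hypothetical ratings for the same eight players, invented for illustration.
named_ratings = [7, 6, 7, 8, 6, 7, 7, 6]      # names attached
anonymous_ratings = [9, 4, 7, 8, 5, 6, 9, 3]  # names hidden

def describe(ratings):
    """Return (range, standard deviation) as two simple measures of spread."""
    return max(ratings) - min(ratings), statistics.pstdev(ratings)

named_range, named_sd = describe(named_ratings)
anon_range, anon_sd = describe(anonymous_ratings)

print(f"named:     range {named_range}, sd {named_sd:.2f}")
print(f"anonymous: range {anon_range}, sd {anon_sd:.2f}")
```

In the named set every player sits between six and eight, so there is almost nothing to separate them. The anonymous set runs from three to nine, which gives a team picker something to work with.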

Position-Specific Knowledge

Here's something that doesn't get discussed enough: the people best qualified to rate a player are the ones who play the same position.

Your group's goalkeepers know more about goalkeeping than anyone else in the squad. They know the difference between a keeper who looks flashy making diving saves and one who positions well enough to never need them. They understand reading angles, commanding the box, and distribution. An outfield player watching from midfield might rate both keepers the same, but the keepers themselves can tell you exactly who's better and why.

The same applies across the pitch. Defenders rate other defenders more accurately because they understand the subtleties of the role. Strikers know which other attackers are genuinely dangerous and which ones just run around a lot.

When the whole group rates everyone, you get this distributed expertise built into the data. The aggregate rating for your goalkeeper includes input from the other keeper who understands the position intimately, the defenders who see the keeper's communication up close, the midfielders who notice distribution quality, and the attackers who know whether the keeper is hard to beat one-on-one. Each contributor adds a piece of the picture that no single rater could assemble alone.

Smoothing Out Outliers

Every group has someone with unusual opinions. Maybe one player genuinely believes that the worst player in the group is actually decent because they had one good game together last summer. Maybe another player has a personal grudge and consistently underrates someone.

With a single rater, these outliers distort everything. If Dave has a blind spot about one player, that blind spot goes straight into the team selection.

With multiple raters, outliers get diluted. If twelve people rate a player and eleven of them say he's a six, one person giving him a two only pulls the average down to about 5.7. The average absorbs the outlier without breaking, and the assessment stays close to what the rest of the group thinks.

This is basic statistics, but it matters a lot in practice. It means the system is robust. One bad rating can't break it. One biased rater can't skew it by much. You don't need every single person to be perfectly objective; you just need enough raters that the noise cancels out.
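The twelve-rater example works out as follows. The median is included as a point of comparison only; it is not something this article relies on, but it shows another common way to shrug off a single odd rating.

```python
import statistics

ratings = [6] * 11 + [2]   # eleven raters say six, one says two

mean_rating = statistics.mean(ratings)      # 68 / 12
median_rating = statistics.median(ratings)  # middle of the sorted ratings

print(round(mean_rating, 2))   # 5.67
print(median_rating)           # 6.0
```

One grudge moves the average by a third of a point. If that same grudge belonged to a single rater picking the teams alone, the player's rating would simply be two.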

The Food Critic Analogy

Think about how you choose a restaurant. You could read one professional food critic's review. The critic has expertise, training, and a refined palate. But their review is still one opinion. Maybe they went on an off night. Maybe the chef was having a bad day. Maybe the critic has a preference for French cuisine and the restaurant serves Thai.

Or you could check the aggregate rating on Google, TripAdvisor, or Yelp. Two hundred reviews from two hundred different people with two hundred different expectations. No individual review is expert-level. But the average? The average is remarkably reliable. A restaurant with a 4.5 across two hundred reviews is almost certainly good. The crowd's collective experience outweighs any single critic's assessment.

Player ratings in a sports group work the same way. Dave is your food critic. He might be right about most players, but his biases and blind spots are baked into every assessment. Peer ratings from the whole group are your two hundred reviews. Individually imperfect, collectively accurate.

This is why SquadBalance uses anonymous peer ratings as the foundation for team generation. Each player rates the others. Nobody sees individual ratings — only the admin sees the aggregated results. The ratings are used to produce balanced teams, and the teams speak for themselves. When the games are tight and competitive, people trust the system because they can see it working.
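To give a feel for how aggregated ratings can turn into teams, here is one simple approach. It is a hypothetical sketch, not SquadBalance's actual algorithm: players are taken strongest first, and each one joins whichever team has the lower total so far, as long as that team still has room. The function name `split_into_two_teams`, the player names and the ratings are all made up.

```python
def split_into_two_teams(avg_ratings):
    """Greedy split: strongest first, each player joins the weaker team with room."""
    team_size = len(avg_ratings) // 2  # assumes an even number of players
    teams = ([], [])
    totals = [0.0, 0.0]
    ranked = sorted(avg_ratings.items(), key=lambda kv: kv[1], reverse=True)
    for name, rating in ranked:
        # Prefer the team with the lower total, unless it is already full.
        weaker = 0 if totals[0] <= totals[1] else 1
        pick = weaker if len(teams[weaker]) < team_size else 1 - weaker
        teams[pick].append(name)
        totals[pick] += rating
    return teams, totals

# Hypothetical aggregated peer ratings (average score per player).
avg_ratings = {
    "Ali": 8.4, "Ben": 7.9, "Cal": 7.1, "Dave": 6.5,
    "Eli": 6.0, "Finn": 5.2, "Gus": 4.6, "Hal": 3.9,
}

teams, totals = split_into_two_teams(avg_ratings)
for team, total in zip(teams, totals):
    print(f"{', '.join(team)}  (total {total:.1f})")
```

With these invented numbers the two teams of four end up level on total rating. A greedy split like this will not always find the best possible balance, but it shows the principle: the better the ratings going in, the closer the teams coming out.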

Getting Your Group to Actually Do It

The concept is easy to accept. Getting fifteen football players to actually sit down and rate each other is the practical challenge. Here's what works:

Keep it simple. A single overall rating per player on a one-to-ten scale. Don't ask for separate ratings on passing, shooting, defending, pace, and stamina — one number per player, done.

Make it fast. If the rating process takes more than three minutes, people won't bother. A link they can tap on their phone while waiting for the kettle to boil is the right level of effort.

Remind people it's anonymous. Nobody will know how you rated anyone. This removes the social barrier that stops people being honest.

Show the results through the games. When you generate teams using the ratings and the game ends 4-3, people notice. Close scorelines are all the validation the system needs.

One Rater vs. Many Raters

The comparison is clear when you lay it out:

A single rater gives you one perspective, shaped by personal bias, limited by their position on the pitch, compressed by social pressure, and anchored to recent memory.

Multiple anonymous raters give you the full picture — diverse perspectives, honest assessments, outlier resistance, and position-specific expertise. The aggregate is more accurate, more stable over time, and perceived as fairer by the group.

Your Tuesday night football group deserves better than one person's guess. Let the crowd decide.